Unifying the Senses: NVIDIA’s Multimodal Model Accelerates Real-Time Physical AI

NVIDIA's new Nemotron 3 Nano Omni model unifies vision, audio, and language into a single architecture, significantly reducing latency and context loss for real-time AI agents.

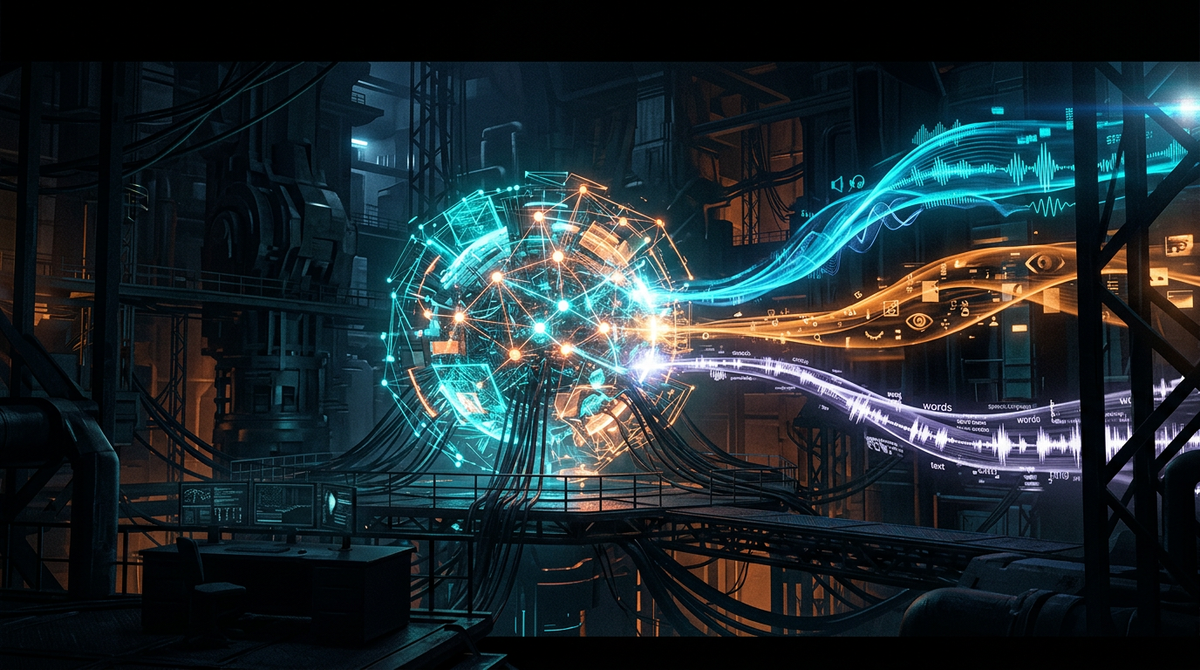

The paradigm of Physical AI is shifting from fragmented, multi-model pipelines to unified, multimodal architectures, and NVIDIA’s launch of the Nemotron 3 Nano Omni model marks a significant milestone in this evolution. Historically, AI agents interacting with the physical world had to "daisy-chain" separate models: a vision model to perceive the environment, an audio model to hear commands, and a language model to reason about what to do. Passing data between these disparate systems created "semantic friction": a loss of temporal context and a noticeable lag that hindered fluid interaction.

The Nemotron 3 Nano Omni addresses this by integrating these modalities into a single, compact model. By unifying vision, audio, and language, the system achieves up to nine times greater efficiency than its predecessors. For Physical AI applications such as factory-floor assistants or assistive service robots, this means perceiving a person's gesture and tone of voice simultaneously and responding in real time, without the "thinking" pauses that break human-robot collaboration.

This efficiency isn't just about speed; it's about deployment. The "Nano" designation signals that the model is optimized for edge computing. In Physical AI, the ability to process complex multimodal data locally on the device, rather than sending it to the cloud, is critical for safety, privacy, and reliability, especially in environments where connectivity is intermittent.

Source: NVIDIA Blog