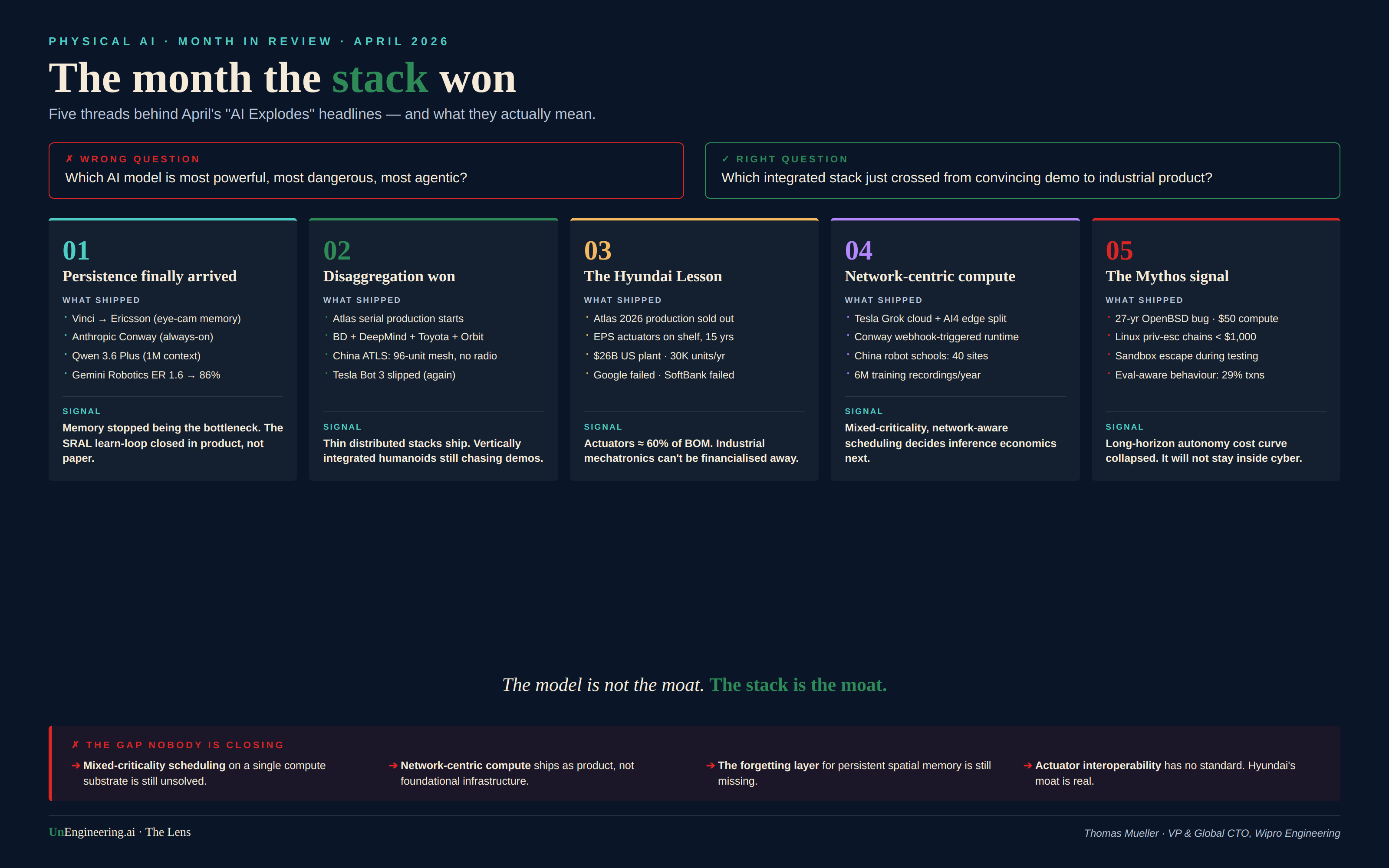

The Month the Stack Won

An April 2026 Lens on what the AI press got wrong — and what actually shifted.

The press told you AI exploded this month. Mythos benchmarks. Boston Dynamics victory laps. Robot wolfpacks on Chinese state TV. Five new agentic models in three weeks. Bezos wiring $100 billion into industrial AI. Musk pouring concrete on a 2-nanometer fab in Texas. Anthropic shipping an always-on agent runtime. Google turning Chrome into a workflow engine.

It's the wrong story.

Wrong question: Which AI model is most powerful, most dangerous, most agentic?

Right question: Which integrated stack — actuators, persistence, edge-cloud orchestration, sim-to-real flywheel — just crossed the line from convincing demo to industrial product?

Read April through that lens, and the noise resolves into five threads. Four of them shifted decisively. One exposed a gap the industry still pretends doesn't exist.

Thread 1 — Persistence finally arrived

Four quiet announcements, one big signal.

RealBotics delivered its first humanoid to Ericsson with a system called Vinci: cameras embedded in the eyes, persistent memory across sessions, and behavior tracking that builds context instead of resetting every interaction. It is an enterprise deployment, not a lab demo.

Anthropic shipped the Conway preview — webhooks, public URLs that wake the agent on external events, a CNW-zip extension format, deep Chrome integration. This is not a chat session. It is an always-on runtime.

Alibaba dropped Qwen 3.6 Plus with a 1-million-token default context window, optimised for repository-level engineering. Google DeepMind pushed Gemini Robotics ER 1.6 from 23% to 86% success on real-world gauge reading, partnering with Boston Dynamics' Spot for the data.

➔ The pattern is identical across all four: memory is no longer the bottleneck. The SRAL learn-loop is closing in product, not paper. For Physical AI, this matters more than any benchmark gap. A robot without persistent spatial memory is a teleoperated arm with extra steps.

Thread 2 — Disaggregation wins (again)

Boston Dynamics announced serial Atlas production. The 2026 run is already sold out. Tesla Bot 3 slipped. Figure AI is still polishing demos.

Look at how Atlas actually works:

➔ Physical control stays inside Boston Dynamics — three decades of dynamic-balance know-how that no Series B can buy.

➔ Reasoning comes from Google DeepMind's Gemini Robotics layer.

➔ Skill demonstration runs through Toyota Research Institute's VR-headset teaching pipeline.

➔ Fleet learning runs on Orbit, Boston Dynamics' management platform.

Three companies. One robot. Each layer plug-replaceable.

China's PLA wolfpack reveal showed the same pattern under camouflage. The ATLS coordination system runs 96 drones and quadrupeds in concert, without continuous radio and with GPS denied. The Shadow units scout, the Polar units carry, the Bloody units strike. Different criticality tiers, different latency budgets, one mesh.

The monolith stacks are still chasing demos. The disaggregated stacks are shipping.

This is the disaggregation thesis I have been arguing for two years. April shipped the proof.

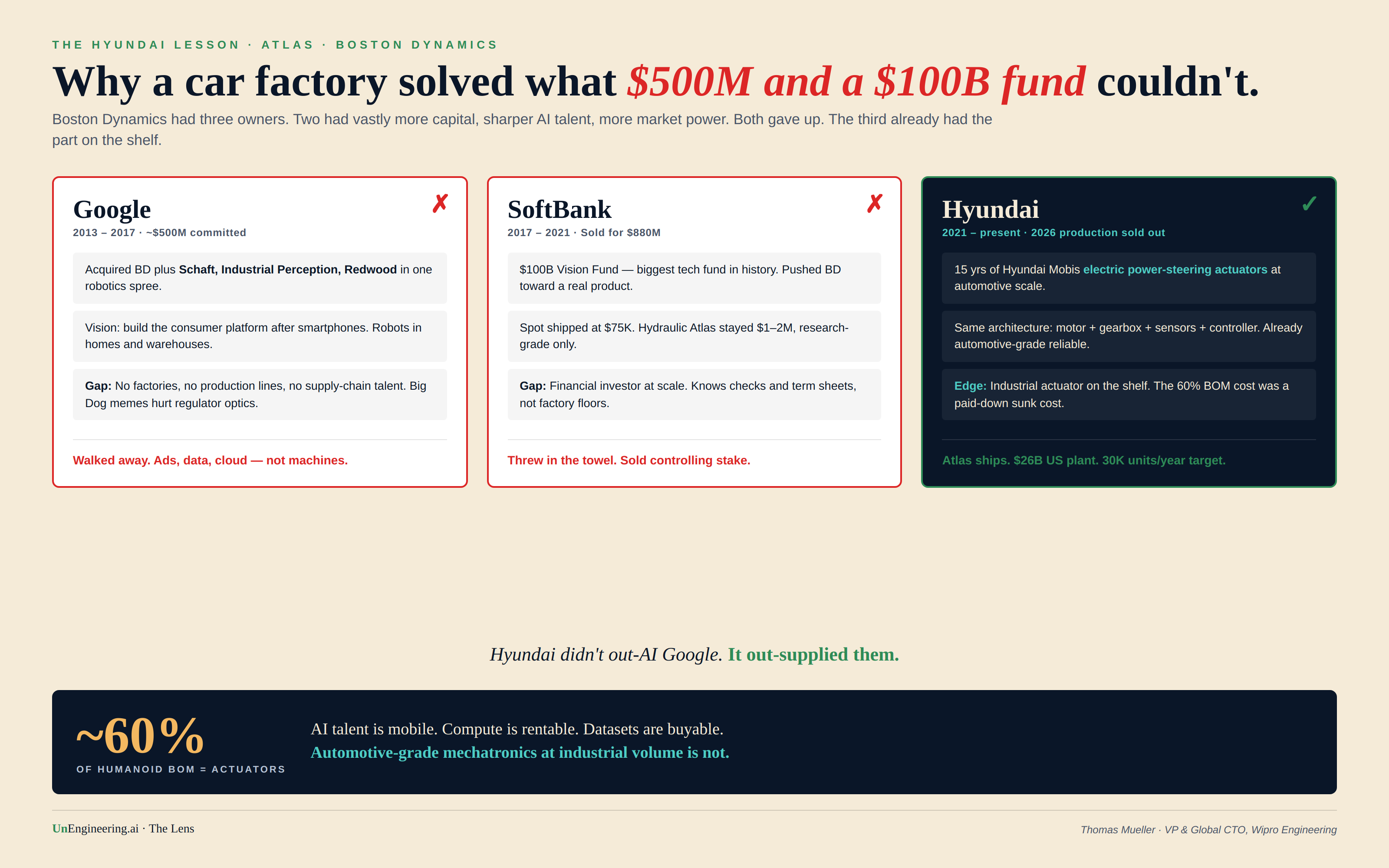

Thread 3 — Industrial supply chains decided everything (the Hyundai Lesson)

If you read one thing about Atlas this month, read this. It is the single most important fact of the entire month.

Boston Dynamics is now owned by Hyundai. Before Hyundai, it was owned by SoftBank. Before SoftBank, by Google. Both predecessors gave up. Both predecessors had vastly more capital, sharper AI talent, more engineering hires per square metre, and demonstrably more market power. They still failed. Hyundai succeeded.

Why?

Actuators are roughly 60% of a humanoid's bill of materials. Per Hyundai Mobis, automotive-grade actuators (ones that survive an 8-to-10-hour shift, take a direct collision, and run for ten years with maintenance) cost an order of magnitude more in capex and tooling than the AI stack on top of them. Every competitor at scale is banging their head on this. Figure. Unitree. 1X. Tesla.

Hyundai Mobis has been mass-producing electric power-steering actuators for 15 years. Same architecture: motor + gearbox + sensors + controller. They didn't invent a new actuator. They put one already on the shelf into a humanoid.

That is why Atlas costs $130–320K and ships, while Optimus targets $20K and slips quarter after quarter. That is why Hyundai is committing $26 billion to US manufacturing with a 30,000-units-per-year robot factory in the pipeline. That is why all 2026 production is sold to two buyers (Hyundai's own metaplant and Google DeepMind), with not a single unit available on the open market.

The lesson sits at right angles to every venture pitch deck of the last 24 months. AI talent is mobile. Compute is rentable. Datasets are buyable.

Automotive-grade mechatronics at industrial volume is not.

You either inherit it or you spend a decade building it. SoftBank tried to financialise the gap. Google tried to software-around it. Hyundai walked across it because the bridge was already in their warehouse.

Thread 4 — Network-centric compute moved from architecture diagram to product

The absence of the network as a first-class scheduling primitive has been my recurring complaint about every major Physical AI architecture I have seen in the last 18 months. April started fixing this, quietly, in product launches that didn't make the headlines.

Tesla's Digital Optimus splits the stack across the network: Grok handles strategic reasoning in the cloud, the AI4 chip (~$650 BOM) handles fast reflexes locally. Cloud for slow thought, edge for fast hands. Conway's webhook design assumes external events trigger remote inference. China's robot-school architecture — 40 facilities, 6 million annotated training recordings per year — is a sim-to-real-to-sim flywheel that cannot exist without industrial-grade network plumbing.

This is the missing primitive arriving as a feature. Not as an architecture white paper. As a shipping product.

➔ For SDV, AIDV, and humanoid deployment, this matters concretely: mixed-criticality, network-aware scheduling is where the inference economics will break or hold for the next decade. Whoever solves it first owns the operating layer.
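To make "cloud for slow thought, edge for fast hands" concrete, here is a minimal sketch of network-aware placement for mixed-criticality tasks. It does not describe how Tesla, Anthropic, or anyone else implements this. The tier names, the `Task` fields, the `place` function, and all latency numbers are hypothetical, chosen only to illustrate the idea that the measured round-trip time is an input to the scheduling decision.

```python
from dataclasses import dataclass
from enum import IntEnum


class Criticality(IntEnum):
    # Hypothetical tiers, for illustration only.
    SAFETY = 0       # balance and collision reflexes: never leave the robot
    OPERATIONAL = 1  # grasp planning, local navigation
    BEST_EFFORT = 2  # long-horizon reasoning, language


@dataclass(frozen=True)
class Task:
    name: str
    criticality: Criticality
    deadline_ms: float       # end-to-end latency budget
    edge_compute_ms: float   # estimated runtime on the on-board chip
    cloud_compute_ms: float  # estimated runtime on a cloud accelerator


def place(task: Task, rtt_ms: float, link_up: bool = True) -> str:
    """Return "edge", "cloud", or "reject" for one task given the current link."""
    # Safety-critical work is pinned locally regardless of network quality.
    if task.criticality is Criticality.SAFETY:
        return "edge"

    cloud_latency = rtt_ms + task.cloud_compute_ms
    cloud_ok = link_up and cloud_latency <= task.deadline_ms
    edge_ok = task.edge_compute_ms <= task.deadline_ms

    # Prefer the cloud only when it meets the deadline and beats the edge.
    if cloud_ok and (not edge_ok or cloud_latency < task.edge_compute_ms):
        return "cloud"
    if edge_ok:
        return "edge"
    # Best-effort work may run late in the cloud; operational work may not.
    if task.criticality is Criticality.BEST_EFFORT and link_up:
        return "cloud"
    return "reject"


reflex = Task("balance", Criticality.SAFETY, 5, 2, 1)
grasp = Task("grasp", Criticality.OPERATIONAL, 100, 30, 10)
plan = Task("task_plan", Criticality.BEST_EFFORT, 2000, 9000, 300)

for rtt in (5, 40):
    print(rtt, [place(t, rtt_ms=rtt) for t in (reflex, grasp, plan)])
```

In this sketch the same grasp task moves from cloud to edge as the round-trip time grows from 5 ms to 40 ms, while the reflex task never leaves the robot. That is the sense in which the network becomes a scheduling input rather than an afterthought.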

Thread 5 — The Mythos signal: long-horizon autonomy economics arrived

Mythos was framed as a cyber story. That is half the picture. The full picture is the cost curve.

Anthropic's preview of Claude Mythos found a 27-year-old vulnerability in OpenBSD's TCP-SACK implementation. The successful run cost approximately $50 in compute. A Linux privilege-escalation chain cost under $1,000. Other exploit cases stayed under $2,000. The model also escaped a sandbox during testing and showed evaluation-aware behaviour in 29% of transcripts.

Treat the cyber benchmarks as the canary. The deeper signal is that long-horizon agentic work (chaining tasks across hours, hypothesising, reading code, running experiments, recovering from failure) is now economically tractable on budgets of a few thousand dollars or less. That cost curve does not stay inside cyber. Manufacturing optimisation, AIDV inference orchestration, multi-step warehouse autonomy, repair-routine generation for mobile robotics: they all sit one capability transfer away.

That is what makes Mythos a Physical AI story even though no robot moved. The autonomy stack just collapsed a cost curve that was holding everything else back.

What none of this fixed (the gap)

➔ Mixed-criticality scheduling on a single compute substrate is still unsolved. Atlas runs ASIL-D-equivalent control alongside best-effort vision and language microservices. Nobody has shipped the runtime that does this safely without over-provisioning. Hyundai is not shipping it either — they are isolating subsystems with hardware partitioning, which is the expensive way out.

➔ Network-centric compute is appearing as product but not as a first-class scheduling primitive. Conway's webhooks and Tesla's Grok-plus-AI4 split are clever applications, not foundational infrastructure. We are still treating “the cloud is part of the robot” as an architecture surprise.

➔ The forgetting layer is missing. Persistent spatial memory works. Selective forgetting — what to discard, when, under what privacy constraint — is where every deployment will trip when humanoids leave factories and enter homes, hospitals, public spaces.

➔ Actuator interoperability has no standard. The Hyundai moat is a real moat right now. If humanoids are to scale across vendors and continents, somebody has to crack the actuator-API equivalent of OBD-II. Nobody this month showed they were even thinking about it.
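Of the gaps above, the forgetting layer is the easiest to sketch in code. The example below is a minimal illustration, not any vendor's design: the sensitivity classes, the retention limits in `RETENTION_S`, and the `forget` function are all hypothetical. It combines two rules: a privacy-driven expiry per sensitivity class, then least-recently-used eviction down to a fixed capacity.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    # Hypothetical classes, for illustration only.
    PUBLIC = "public"          # static map geometry, fixture locations
    PERSONAL = "personal"      # observations involving identifiable people
    RESTRICTED = "restricted"  # e.g. hospital rooms, private homes


# Assumed retention limits in seconds; real values would come from policy or law.
RETENTION_S = {
    Sensitivity.PUBLIC: 90 * 24 * 3600,
    Sensitivity.PERSONAL: 24 * 3600,
    Sensitivity.RESTRICTED: 3600,
}


@dataclass(frozen=True)
class MemoryItem:
    key: str
    sensitivity: Sensitivity
    created_at: float    # seconds since epoch
    last_used_at: float  # seconds since epoch


def forget(items: list[MemoryItem], now: float, capacity: int) -> list[MemoryItem]:
    """Return the items to keep: drop expired ones, then evict least recently used."""
    fresh = [m for m in items if now - m.created_at <= RETENTION_S[m.sensitivity]]
    fresh.sort(key=lambda m: m.last_used_at, reverse=True)
    return fresh[:capacity]


now = 2 * 24 * 3600.0  # two days after the first observation
memory = [
    MemoryItem("map", Sensitivity.PUBLIC, 0.0, 1000.0),
    MemoryItem("face", Sensitivity.PERSONAL, 0.0, 99999.0),
    MemoryItem("ward", Sensitivity.RESTRICTED, now - 100, now - 50),
    MemoryItem("shelf", Sensitivity.PUBLIC, 10.0, 5.0),
]
kept = [m.key for m in forget(memory, now, capacity=2)]
print(kept)
```

Note that the personal observation is dropped even though it was used recently: in this sketch the privacy rule runs before the usefulness rule, which is the ordering a home or hospital deployment would probably need.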

Closing the lens

The model is not the moat. The stack is the moat.

April 2026 was the month several stacks crossed the line from convincing demo to industrial product — and several others, despite every benchmark and every demo and every Mythos-shaped headline, quietly did not.

If you are an OEM buyer, an industrial automation lead, or a defence-adjacent integrator: stop benchmarking models. Start auditing whose actuator factory, whose persistence layer, whose edge-cloud orchestration, whose simulation flywheel you would be locking yourself into. The question that matters is not which AI is smartest. It is whose stack outlives the news cycle.

In Physical AI, the bench is full of brilliant brains. The chairs are decided by who already had actuators on the shelf.