Reasoning in Motion: How LLMs Are Giving Robots a 'Brain'

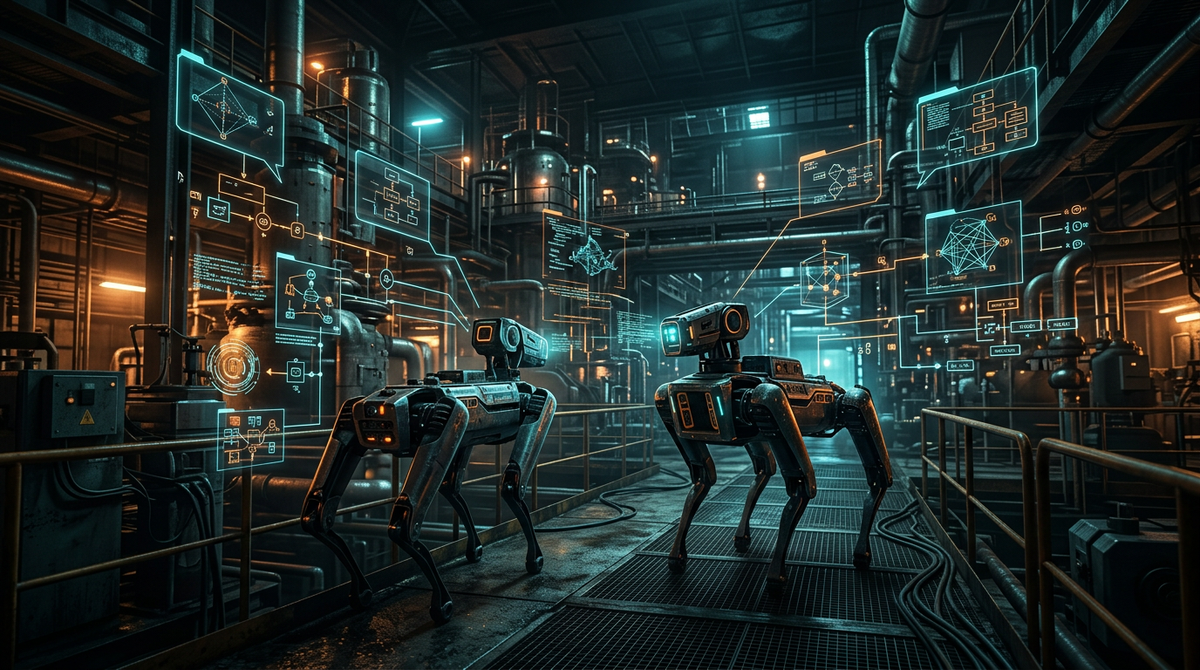

Boston Dynamics, Google DeepMind, and NVIDIA have integrated large language models with Spot, Boston Dynamics' quadruped robot, enabling it to reason through complex environments. The work marks a shift in robotic navigation from rigid, hand-written code to natural-language instruction.

Robotics is undergoing a fundamental shift as foundation models, the large pretrained models behind generative AI, are integrated into physical machines. Working together, Boston Dynamics and Google DeepMind have demonstrated how the Spot quadruped can now 'reason' about its environment using vision-language models (VLMs).

Historically, programming a robot to perform a task meant explicitly coding every movement and every expected sensor input. If the robot met an obstacle its code did not anticipate, it would typically fail. The new approach lets Spot take a high-level instruction, such as "find the leaking pipe and notify the supervisor," and decompose it into a sequence of logical steps informed by its real-time visual feed.
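The observe-plan-act loop described above can be illustrated with a minimal Python sketch. It is not Boston Dynamics' or Google DeepMind's implementation: `stub_planner` is a hypothetical, rule-based stand-in for a language-model call, and `Observation`, `run_task`, and the skill names (`explore`, `approach_pipe`, `notify_supervisor`) are illustrative assumptions. What it shows is the structure: at each step the planner sees the goal, the latest visual observation, and the steps already taken, and returns only the next action.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """What the robot 'sees' at one step: object labels a vision-language
    model might extract from a camera frame (hypothetical format)."""
    labels: set[str]


def stub_planner(goal: str, obs: Observation, history: list[str]) -> str:
    """Hypothetical stand-in for a language-model call. A real system would
    prompt a model with the goal, observation, and history; this stub ignores
    `goal` and follows fixed rules so the loop can run offline."""
    if "notify_supervisor" in history:
        return "done"
    if "leaking pipe" in obs.labels:
        if "approach_pipe" not in history:
            return "approach_pipe"
        return "notify_supervisor"
    return "explore"


def run_task(goal: str, frames: list[Observation], max_steps: int = 20) -> list[str]:
    """Closed loop: observe, ask the planner for one step, record it, repeat."""
    history: list[str] = []
    for step in range(max_steps):
        # Reuse the last frame once the scripted camera feed runs out.
        obs = frames[min(step, len(frames) - 1)]
        action = stub_planner(goal, obs, history)
        if action == "done":
            break
        history.append(action)
    return history


# A scripted "camera feed" standing in for real perception output.
feed = [
    Observation({"corridor"}),
    Observation({"corridor", "door"}),
    Observation({"leaking pipe", "valve"}),
]
print(run_task("find the leaking pipe and notify the supervisor", feed))
# ['explore', 'explore', 'approach_pipe', 'notify_supervisor']
```

Planning one step at a time, rather than emitting a full plan up front, lets each decision depend on what the camera reports at that moment; the `max_steps` cap keeps the loop from running forever if the target never appears.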

This integration enables semantic navigation, in which the robot interprets what it sees in context. Instead of registering only a 'geometric obstruction', it recognizes a 'locked door' and can decide on its own to find an alternate route. By combining natural language processing with physical actuation, NVIDIA and its partners are bridging the gap between digital intelligence and physical work, a core pillar of the emerging Physical AI sector. The technology is expected to have its greatest impact on industrial inspection and search-and-rescue operations, where environments are unpredictable and the stakes are high.
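To make the difference between a geometric obstacle and a semantic one concrete, here is a small Python sketch assuming a hypothetical facility map in which rooms are graph nodes and each connection passes through a named door. When a (hypothetical) perception step labels a door a "locked door", the planner marks it impassable and reruns a breadth-first search for another route. The map, door names, and functions are illustrative only and are not part of any system described in the article.

```python
from collections import deque

# Hypothetical facility map: each edge joins two rooms through a named door.
EDGES = {
    ("lobby", "hall"): "door_A",
    ("hall", "pump_room"): "door_B",
    ("lobby", "stairwell"): "door_C",
    ("stairwell", "catwalk"): "door_D",
    ("catwalk", "pump_room"): "door_E",
}


def neighbors(room: str, blocked: frozenset[str]):
    """Yield rooms reachable from `room` through doors that are not blocked."""
    for (a, b), door in EDGES.items():
        if door in blocked:
            continue
        if a == room:
            yield b
        elif b == room:
            yield a


def shortest_route(start: str, goal: str, blocked: frozenset[str] = frozenset()):
    """Breadth-first search: route with the fewest doorways, or None if unreachable."""
    parent = {start: None}
    queue = deque([start])
    while queue:
        room = queue.popleft()
        if room == goal:
            # Walk the parent links back to the start to recover the route.
            path = []
            while room is not None:
                path.append(room)
                room = parent[room]
            return path[::-1]
        for nxt in neighbors(room, blocked):
            if nxt not in parent:
                parent[nxt] = room
                queue.append(nxt)
    return None


def update_blocked(label: str, door: str, blocked: frozenset[str]) -> frozenset[str]:
    """Turn a semantic label from perception into a map change (assumption:
    a 'locked door' is impassable; other labels leave the map unchanged)."""
    if label == "locked door":
        return blocked | {door}
    return blocked


print(shortest_route("lobby", "pump_room"))
# ['lobby', 'hall', 'pump_room']
blocked = update_blocked("locked door", "door_B", frozenset())
print(shortest_route("lobby", "pump_room", blocked))
# ['lobby', 'stairwell', 'catwalk', 'pump_room']
```

The first route passes through `door_B`; once that door is reported locked, the search returns the longer path via the stairwell and catwalk. A real robot would use a metric map and a cost-based planner, but the principle, turning a semantic label into a change in the map and then replanning, would be similar.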

Source: IEEE Spectrum