Bridging the Reality Gap: How Virtual Worlds Are Forging Physical AI

NVIDIA is pivoting toward the 'Physical AI' era by integrating its Omniverse simulation platform with real-world robotics. This combination lets developers train autonomous agents in photorealistic, physics-accurate virtual environments before deploying them in the physical world.

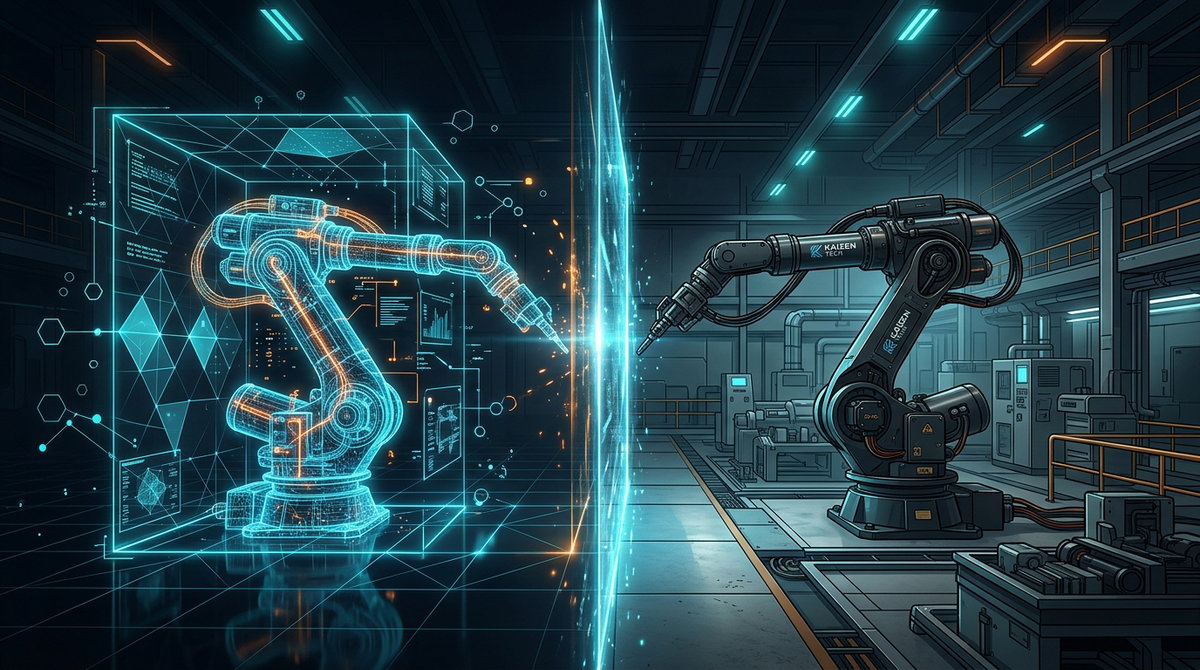

The shift to Physical AI is being accelerated by the intersection of high-fidelity simulation and generative models. NVIDIA’s recent focus at GTC shows that the Omniverse platform is no longer just a visualization tool but a foundational training ground for the next generation of autonomous machines. By creating 'digital twins' of complex environments, developers can run AI agents through millions of iterations in a virtual space that mirrors the laws of physics, drastically reducing the time and risk associated with real-world testing.
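To make the idea of many cheap virtual trials concrete, here is a minimal sketch in plain Python. It does not use Omniverse or any NVIDIA API; the cart, the friction range, and the function names `simulate_stop` and `run_trials` are assumptions made up for illustration. Each trial draws a random floor friction and checks, using the textbook stopping-distance formula v² / (2μg), whether a braking cart stops before an obstacle.

```python
import random

G = 9.81  # gravitational acceleration in m/s^2


def simulate_stop(speed, friction, obstacle_distance):
    """Return True if a cart braking from `speed` stops before the obstacle.

    Uses the standard stopping distance for constant friction:
    d = v^2 / (2 * mu * g).
    """
    stopping_distance = speed ** 2 / (2 * friction * G)
    return stopping_distance < obstacle_distance


def run_trials(n_trials, speed, obstacle_distance, seed=0):
    """Estimate the collision rate over many randomized virtual trials.

    The friction range below is a hypothetical choice, standing in for
    the variation a real floor might show.
    """
    rng = random.Random(seed)  # seeded so runs are repeatable
    collisions = 0
    for _ in range(n_trials):
        friction = rng.uniform(0.3, 0.9)
        if not simulate_stop(speed, friction, obstacle_distance):
            collisions += 1
    return collisions / n_trials


if __name__ == "__main__":
    rate = run_trials(n_trials=100_000, speed=2.0, obstacle_distance=0.4)
    print(f"Estimated collision rate: {rate:.3f}")
```

A real digital twin replaces the one-line physics with a full simulator, but the pattern is the same: vary the conditions, run the trial in software, and count the failures before any hardware is at risk.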

This 'simulation-to-reality' pipeline is critical for Physical AI, where a software error can mean a physical collision or hardware damage. NVIDIA is integrating Large Multimodal Models (LMMs) directly into these workflows, allowing robots to understand natural language instructions and translate them into physical actions within the simulation. The result is a feedback loop in which the AI perceives, reasons, and acts in a continuous cycle.
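The perceive, reason, act cycle described above can be sketched as a toy loop in plain Python. Everything here is an assumption for illustration: `Sim1D` is a one-dimensional stand-in for a simulator, `LOCATIONS` is an invented map, and `parse_instruction` is a simple keyword lookup standing in for the language model, not an actual LMM or NVIDIA API.

```python
from dataclasses import dataclass

# Hypothetical named locations along a 1D track, in meters.
LOCATIONS = {"dock": 0.0, "shelf": 5.0}


@dataclass
class Sim1D:
    """Toy simulator: a robot moving along a line."""
    position: float = 0.0

    def perceive(self):
        # A real system would read simulated cameras or lidar here.
        return self.position

    def act(self, velocity, dt=0.1):
        # Advance the simulated world by one time step.
        self.position += velocity * dt


def parse_instruction(text):
    """Keyword lookup standing in for a language model (an assumption)."""
    lowered = text.lower()
    for name, target in LOCATIONS.items():
        if name in lowered:
            return target
    raise ValueError(f"no known location in instruction: {text!r}")


def run_episode(sim, instruction, gain=2.0, max_speed=1.0,
                tolerance=0.01, max_steps=1000):
    """Run the perceive-reason-act loop; return the number of steps used."""
    target = parse_instruction(instruction)
    for step in range(max_steps):
        error = target - sim.perceive()               # perceive
        if abs(error) < tolerance:
            return step
        command = gain * error                        # reason
        velocity = max(-max_speed, min(max_speed, command))
        sim.act(velocity)                             # act
    return max_steps


if __name__ == "__main__":
    sim = Sim1D()
    steps = run_episode(sim, "Go to the shelf")
    print(f"Reached {sim.position:.2f} m in {steps} steps")
```

The loop uses a clamped proportional controller as the "reasoning" step purely to keep the example short; in the workflow the article describes, that step would be a learned policy.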

Enterprises are already using these virtual worlds to optimize factory floors and logistics hubs. By simulating every sensor, motor, and gear, engineers can identify bottlenecks and safety hazards before a single piece of hardware is installed. As autonomous systems become pervasive, the ability to 'pre-train' machines for the physical world in software will be a defining competitive advantage.

Source: NVIDIA Blogs