AI-Defined Vehicles: The Industry’s Newest Acronym Deserves a Harder Question

Most vehicles can’t even run a container. Calling them AI-defined is premature.

At Mobile World Congress Barcelona in March 2026, LG Electronics unveiled a next-generation smart telematics solution—a combined TCU-antenna module integrating 5G, GPS, V2X, and satellite communications into a single unit. The engineering is solid: consolidating antenna and telematics control reduces signal loss, eliminates the shark-fin antenna, and simplifies wiring harnesses. With a reported 23 percent share of the global telematics market, LG has earned the right to make bold claims about where automotive connectivity is heading.

But the press release carried a claim that warrants far more scrutiny than the product itself: the automotive industry is transitioning from Software-Defined Vehicles (SDVs) to AI-Defined Vehicles (AIDVs). LG’s Vehicle Solution president framed in-vehicle connectivity as the path that leads beyond the SDV paradigm and into the AIDV era.

LG is not planting this flag alone. At CES 2026, Qualcomm launched Snapdragon Chassis Agents—agentic AI frameworks running on-device across cockpit, ADAS, telematics, and V2X domains. LG Innotek showcased an autonomous driving mock-up explicitly labeled AIDV. Sonatus demonstrated its AI Director platform deploying edge AI models on a 2026 Nissan LEAF and a Kenworth truck. A Frontiers in Robotics and AI paper formalized AIDV as a distinct vehicle class with its own reference architecture. Hyundai launched Pleos as an SDV software brand. ZF, Elektrobit, Vector, and AUMOVIO all demonstrated AIDV-adjacent capabilities. There is even a dedicated European conference track—SDV & AIDV Week—treating both as co-equal paradigms.

So practitioners should be asking a direct question: does AIDV represent a genuine architectural discontinuity, or is it a marketing repackaging of capabilities that were always the endgame of the SDV thesis?

The AIDV Proposition

The core claim is that in an SDV, software abstracts hardware and enables OTA updates, but the driver remains the primary decision-maker. You select the driving mode, activate lane-keeping, choose the route. The vehicle is programmable but fundamentally reactive. In an AIDV, AI becomes the primary orchestration layer. The vehicle continuously interprets context—weather, traffic, biometric signals, time of day, historical preferences—and adapts proactively. Suspension damping, energy management, cabin ambience, driving style—all adjusted autonomously, without user input. One commentator described it as the shift from a vehicle where AI is a feature to one where AI runs everything.
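To make the SDV/AIDV distinction concrete, here is a minimal Python sketch contrasting the two control models. The `Context` fields, setting names, and thresholds are hypothetical, and fixed rules stand in for the learned models a production AIDV would use. The only point it illustrates is where a decision originates: an explicit driver command in one case, inferred context in the other.

```python
from dataclasses import dataclass


@dataclass
class Context:
    # Hypothetical context signals; field names are illustrative only.
    road_wet: bool
    traffic_density: float  # 0.0 = empty road, 1.0 = gridlock
    driver_heart_rate: int  # beats per minute from a biometric sensor
    hour: int               # local hour of day, 0-23


def sdv_reactive(command: str) -> dict:
    """SDV model: settings change only when the driver explicitly asks."""
    presets = {"comfort": {"drive_mode": "comfort"}, "sport": {"drive_mode": "sport"}}
    return presets.get(command, {})


def aidv_proactive(ctx: Context) -> dict:
    """AIDV model: settings are inferred from context with no command at all.
    Fixed rules stand in for learned models here (an assumption of this sketch)."""
    settings = {"drive_mode": "normal", "regen": "normal", "cabin_light": "neutral"}
    if ctx.road_wet:
        settings["drive_mode"] = "traction"  # driving style adapts to weather
    if ctx.traffic_density > 0.7:
        settings["regen"] = "max"  # energy management favors regen in stop-and-go traffic
    if ctx.driver_heart_rate > 100:
        settings["cabin_light"] = "calm"  # cabin ambience responds to a stress signal
    elif ctx.hour >= 21 or ctx.hour < 6:
        settings["cabin_light"] = "dim"  # otherwise, dim the cabin late at night
    return settings


print(sdv_reactive("sport"))
print(aidv_proactive(Context(road_wet=True, traffic_density=0.8, driver_heart_rate=72, hour=22)))
```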

Qualcomm’s framing is more architectural. Its Chassis Agents implement compound AI as distributed agentic systems that connect to domain-specific Model Context Protocol servers for perception, planning, and actuation. The dual Snapdragon Elite platform, combining Oryon CPU, Adreno GPU, and Hexagon NPU in parallel, can run a full-modality large AI model for the cockpit and a vision-language-action model for ADAS simultaneously on a single controller. Google’s collaboration with Qualcomm will layer Gemini Enterprise for automotive on top, with the stated aim of proactive, voice-driven AI assistants that anticipate driver needs.

Sonatus’s co-founder Yu Fang offered perhaps the most honest framing in the industry: “SDV is the infrastructure; AI is the brain. Without SDV’s capabilities in data acquisition, governance, and execution, AI cannot be deployed at the vehicle edge.” That single sentence exposes the fundamental tension in the AIDV narrative. Frost & Sullivan projects in-vehicle AI to grow from $43 billion in 2025 to $238 billion by 2030. The money is real. The question is whether the architectural foundation exists to absorb it.

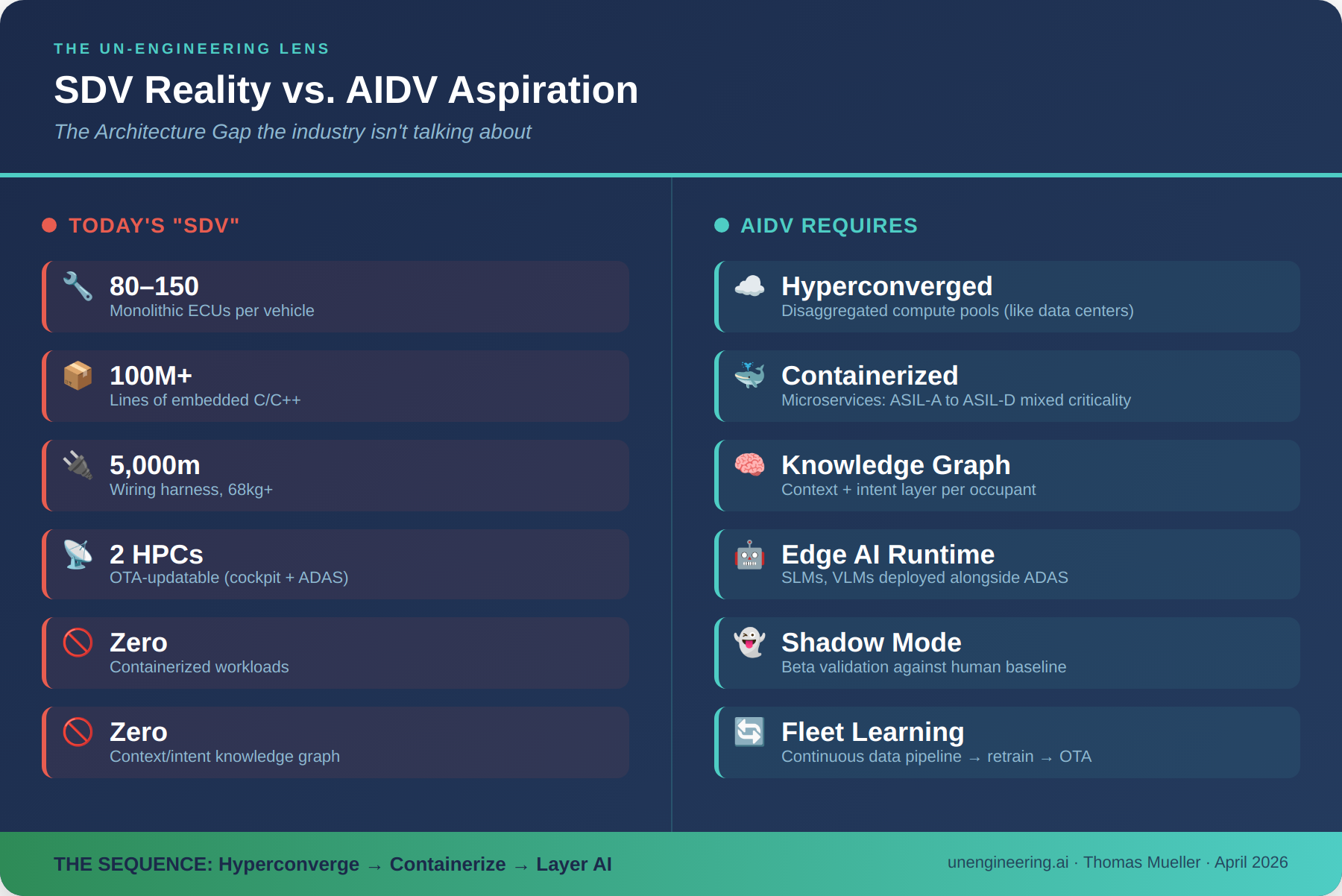

The Uncomfortable Reality: Most “SDVs” Aren’t

Here is where the practitioner’s lens gets uncomfortable. Before debating whether vehicles should be AI-defined, we need to confront the fact that most vehicles marketed as software-defined are not even that. Today’s typical “SDV” means an OTA solution covering two main high-performance computers (HPCs), cockpit and ADAS, and perhaps a handful of additional ECUs. The vehicle still runs 80 to 150 embedded ECUs, each executing monolithic C/C++ code on dedicated microcontrollers, networked across CAN, CAN FD, LIN, and other legacy buses. Upward of 100 million lines of code, much of it safety-critical. More than 5,000 meters of wiring harness weighing upward of 68 kilograms.

This is not a software-defined architecture. It is a hardware-defined architecture with a software-updatable cockpit bolted on top. Calling it SDV is generous. Calling its successor AIDV is aspirational to the point of fiction.

What a True AI-Capable Vehicle Architecture Requires

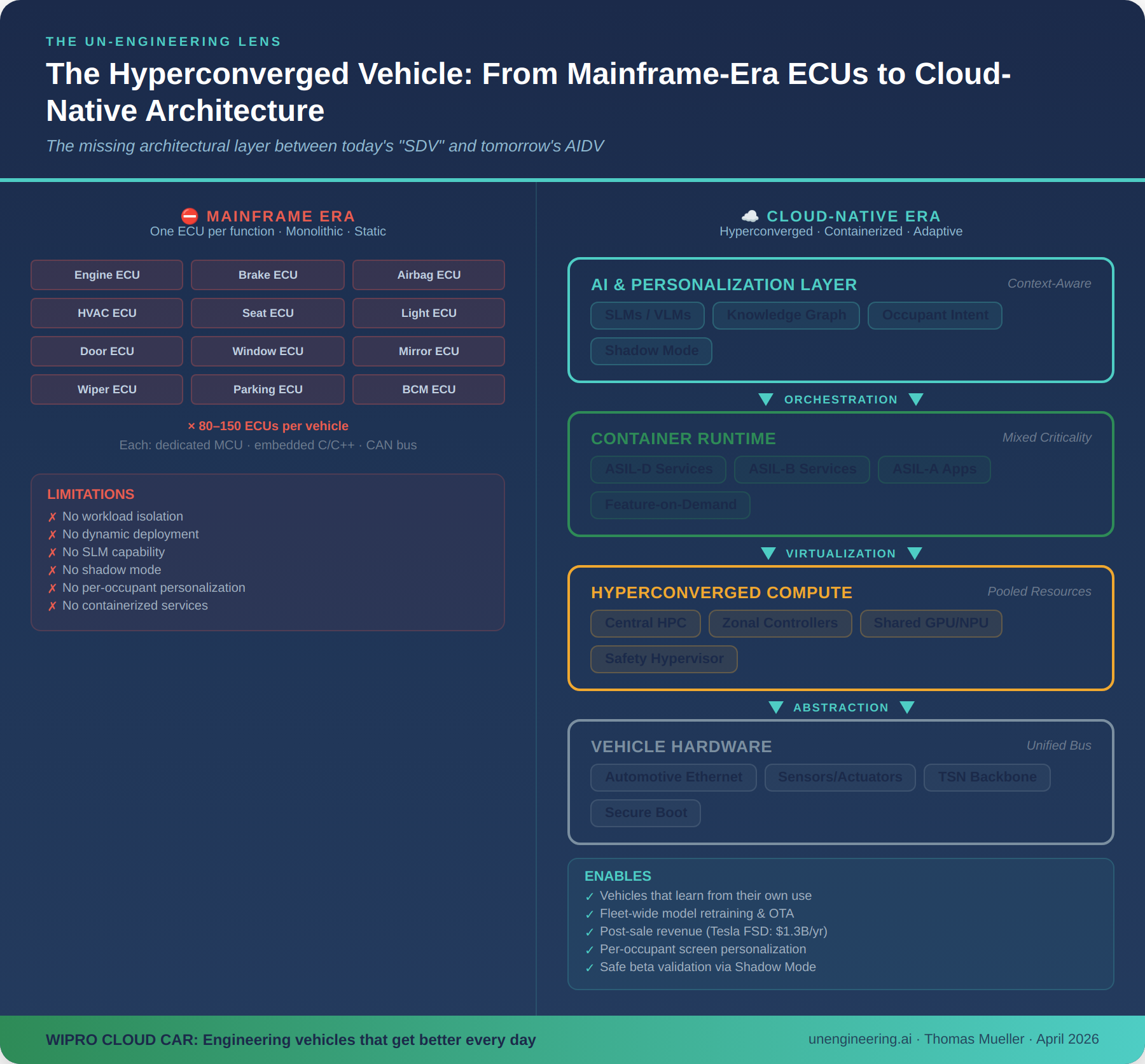

With Wipro Cloud Car, my team and I set out to address this gap at the architectural level. The core insight was borrowed from data center design: if hyperconverged infrastructure can disaggregate compute, storage, and networking into software-managed pools in a data center, the same principle applies to vehicle E/E architecture. We proposed running vehicle workloads as containerized microservices with mixed criticalities ranging from ASIL-A through ASIL-D on a shared hyperconverged compute platform—replacing the one-ECU-per-function model with a virtualized, orchestrated architecture.
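A minimal sketch of the placement idea follows. It assumes, hypothetically, that the shared compute node is pre-divided into isolated partitions, each certified for a single ISO 26262 criticality level and given a CPU-core budget. Workloads land only in a partition matching their ASIL, so a QM media service never shares an isolation domain with an ASIL-D brake function. The partition layout, workload names, and budgets are illustrative and are not taken from Cloud Car.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Asil(IntEnum):
    # ISO 26262 integrity levels; QM means quality-managed (no ASIL requirement).
    QM = 0
    A = 1
    B = 2
    C = 3
    D = 4


@dataclass
class Workload:
    name: str
    asil: Asil
    cores: float  # CPU cores the containerized service needs


@dataclass
class Partition:
    # One isolated partition (e.g. a VM) on the shared compute node.
    asil: Asil
    core_budget: float
    workloads: list = field(default_factory=list)

    def used(self) -> float:
        return sum(w.cores for w in self.workloads)


def place(workloads, partitions):
    """Assign each workload to a partition of the same ASIL that has spare cores.

    Returns {workload name: partition index}; raises RuntimeError if a
    workload cannot be placed.
    """
    placement = {}
    for w in workloads:
        for i, p in enumerate(partitions):
            if p.asil == w.asil and p.used() + w.cores <= p.core_budget:
                p.workloads.append(w)
                placement[w.name] = i
                break
        else:
            raise RuntimeError(f"no {w.asil.name} partition has room for {w.name}")
    return placement


partitions = [Partition(Asil.D, 4.0), Partition(Asil.B, 2.0), Partition(Asil.QM, 4.0)]
plan = place(
    [
        Workload("brake_arbiter", Asil.D, 1.0),
        Workload("lane_keep", Asil.B, 1.5),
        Workload("media_player", Asil.QM, 2.0),
        Workload("slm_assistant", Asil.QM, 2.0),
    ],
    partitions,
)
print(plan)
```

The one-partition-per-ASIL rule is a deliberately conservative simplification. ISO 26262 also allows elements of different ASILs to coexist if freedom from interference is demonstrated, or if everything is developed to the highest ASIL involved. This sketch does not model either option.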

This is not incremental. It is the difference between a mainframe-era computing model—where every application gets its own dedicated machine—and a cloud-native model where workloads run as containers on shared infrastructure. The automotive industry is still, architecturally speaking, in its mainframe era.

A fundamental flaw in today’s vehicle architecture is the absence of a context and intent layer. No production vehicle today maintains a knowledge graph that stretches from the individual (their context, preferences, intent, and behavioral patterns) to the vehicle’s exposed services across infotainment, navigation, entertainment, climate, and driving dynamics. Without this layer, the vehicle cannot reason about what the occupant needs; it can only react to explicit inputs.
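As a rough illustration of what a context and intent layer could look like, the sketch below stores facts as subject-predicate-object triples and walks occupant-to-intent-to-service edges. It also resolves which occupant sits in a seat so that a screen can be personalized per person. The predicate names (`occupied_by`, `has_intent`, `served_by`) and all node names are hypothetical. A production system would also need persistence, intent inference from live signals, and privacy controls, none of which this toy includes.

```python
from collections import defaultdict


class ContextGraph:
    """Toy triple store linking seats, occupants, intents, and vehicle services."""

    def __init__(self):
        # (subject, predicate) -> set of objects
        self.edges = defaultdict(set)

    def add(self, subject: str, predicate: str, obj: str) -> None:
        self.edges[(subject, predicate)].add(obj)

    def objects(self, subject: str, predicate: str) -> set:
        # .get avoids creating empty entries for unknown subjects
        return self.edges.get((subject, predicate), set())

    def services_for(self, occupant: str) -> set:
        """Follow occupant -> intent -> service edges to find services worth offering."""
        services = set()
        for intent in self.objects(occupant, "has_intent"):
            services |= self.objects(intent, "served_by")
        return services

    def services_for_seat(self, seat: str) -> set:
        """Personalize a seat's screen: resolve who sits there, then their services."""
        services = set()
        for occupant in self.objects(seat, "occupied_by"):
            services |= self.services_for(occupant)
        return services


g = ContextGraph()
g.add("passenger_seat", "occupied_by", "alex")
g.add("alex", "has_intent", "catch_up_on_news")
g.add("alex", "has_intent", "stay_cool")
g.add("catch_up_on_news", "served_by", "news_stream")
g.add("stay_cool", "served_by", "zone_climate")
print(g.services_for_seat("passenger_seat"))
```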

Consider the screen real estate in a modern premium vehicle: center display, instrument cluster, head-up display, passenger screen, rear-seat entertainment. In most vehicles, the passenger screen merely duplicates what the driver already sees: the same map, the same media controls. It lacks even basic personalization such as an individual login. Passengers are better served by pulling out their hyperpersonalized smartphones to browse, scroll, and stream. The vehicle’s “smart” surfaces are, in practice, dumb terminals.

Containerization: The Real Gating Factor

The reason vehicles cannot easily run small language models (SLMs), deploy edge AI agents, or offer genuinely personalized services is not a silicon problem: Qualcomm’s Snapdragon Elite 8797P, at 1,280 TOPS per SoC, provides ample compute. The problem is that the vehicle’s onboard platform lacks containerization. Without container orchestration, you cannot dynamically deploy, update, or isolate AI workloads. You cannot run an SLM alongside an ADAS pipeline without risking safety-critical interference. You cannot offer features-on-demand that are more substantive than infotainment widgets.
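The isolation argument can be illustrated with a toy admission controller. It assumes, hypothetically, that accelerator capacity is split into a fixed pool for safety-critical workloads and a separate best-effort pool for AI containers such as an SLM. Deploys and version updates are checked only against the container's own pool, so a growing assistant cannot starve the ADAS pipeline. Pool sizes and container names are invented for illustration. Real freedom from interference also depends on memory, bus, and cache isolation, which this model ignores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Container:
    name: str
    version: str
    tops: int              # accelerator throughput reserved for this container
    safety_critical: bool


class Orchestrator:
    """Toy admission controller with two fixed accelerator pools.

    Safety-critical and best-effort containers draw from separate budgets, so
    deploying or growing an AI assistant can never eat into ADAS capacity.
    """

    def __init__(self, critical_tops: int, best_effort_tops: int):
        self.capacity = {True: critical_tops, False: best_effort_tops}
        self.running: dict[str, Container] = {}

    def used(self, critical: bool) -> int:
        return sum(c.tops for c in self.running.values() if c.safety_critical == critical)

    def deploy(self, c: Container) -> bool:
        """Deploy a new container or update a running one; False means rejected."""
        old = self.running.get(c.name)
        if old is not None and old.safety_critical != c.safety_critical:
            return False  # an update may not move a workload between pools
        freed = old.tops if old is not None else 0  # an update releases the old reservation
        if self.used(c.safety_critical) - freed + c.tops > self.capacity[c.safety_critical]:
            return False  # reject instead of squeezing workloads already running
        self.running[c.name] = c
        return True


orch = Orchestrator(critical_tops=600, best_effort_tops=400)
orch.deploy(Container("adas_perception", "1.0", 500, True))
orch.deploy(Container("cabin_slm", "0.1", 300, False))
orch.deploy(Container("cabin_slm", "0.2", 380, False))   # version update within the pool
orch.deploy(Container("second_slm", "0.1", 100, False))  # rejected: 380 + 100 > 400
```

Rejecting an oversized deploy, rather than shrinking what is already running, keeps the safety pool's guarantees independent of anything the best-effort side does.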

This matters enormously for monetization. Tesla’s Full Self-Driving remains the only significant post-sale software revenue stream in the industry. Tesla disclosed 1.1 million active FSD users by the end of 2025 and shifted to subscription-only pricing in February 2026 at $99 per month, building recurring revenue from an installed base generating over $1.3 billion annually. Why is Tesla alone here? Because FSD learns from its own use. It improves through fleet-wide data collection and continuous model retraining. The vehicle gets better every day. No other OEM has achieved this because no other OEM has built the end-to-end architecture, from data pipeline through training through OTA deployment, that makes it possible.
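The revenue figure is easy to sanity-check. As a simplifying assumption, suppose all 1.1 million active users paid the $99 monthly subscription for a full year. In reality, many bought FSD outright, so this is a run-rate illustration rather than reported revenue:

```python
ACTIVE_USERS = 1_100_000   # active FSD users at end of 2025, as cited above
MONTHLY_PRICE_USD = 99     # subscription price from February 2026


def annual_run_rate(users: int, monthly_price_usd: int) -> int:
    """Annual revenue if every user paid the monthly price for twelve months."""
    return users * monthly_price_usd * 12


rate = annual_run_rate(ACTIVE_USERS, MONTHLY_PRICE_USD)
print(f"${rate:,} per year (~${rate / 1e9:.2f}B)")  # prints "$1,306,800,000 per year (~$1.31B)"
```

That lands just above the $1.3 billion cited, so under this assumption the two disclosed figures are consistent with each other.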

Shadow Mode: The Missing Capability

One of the most powerful concepts in the Cloud Car program was Shadow Mode, a capability that, in a hyperconverged architecture, is simply another virtual machine on shared infrastructure. Shadow Mode runs beta software in parallel with production systems, executing candidate algorithms against real-world driving data and validating them against the human baseline without impacting safety or performance. It is the automotive equivalent of shadow (or dark) deployments in cloud infrastructure, where mirrored production traffic exercises a new version whose outputs are never served to users.
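Here is a minimal sketch of the Shadow Mode loop. It assumes, for simplicity, that both policies see the same logged frames and make a single brake/no-brake decision. Only the production policy's output is returned as actuated. The candidate's output is compared against what the human driver did and counted, but never executed. `Frame`, the gap thresholds, and the disagreement metric are hypothetical. A real deployment would run the candidate concurrently in its own isolated partition rather than inside the same loop.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple


@dataclass
class Frame:
    # One logged time step: a perception-derived input plus the human driver's action.
    lead_gap_m: float   # distance to the vehicle ahead, in metres
    human_brake: bool   # whether the driver actually braked at this step


Policy = Callable[[Frame], bool]  # returns True if the policy would brake


def run_shadow(frames: Sequence[Frame], production: Policy, candidate: Policy) -> Tuple[List[bool], float]:
    """Actuate the production policy; evaluate the candidate silently.

    Returns the commands that would reach the actuators and the fraction of
    frames where the candidate disagreed with the human baseline.
    """
    actuated = []
    disagreements = 0
    for f in frames:
        actuated.append(production(f))    # only this output is ever actuated
        if candidate(f) != f.human_brake:  # candidate output is compared and logged, never executed
            disagreements += 1
    rate = disagreements / len(frames) if frames else 0.0
    return actuated, rate


log = [Frame(50.0, False), Frame(12.0, True), Frame(8.0, True), Frame(30.0, False)]
commands, rate = run_shadow(
    log,
    production=lambda f: f.lead_gap_m < 10.0,
    candidate=lambda f: f.lead_gap_m < 15.0,
)
print(commands, rate)
```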

Shadow Mode is precisely the mechanism that enables a vehicle to learn from its own use, the capability that makes Tesla’s FSD architecture economically self-reinforcing. Without containerized, mixed-criticality compute that can safely isolate experimental workloads from production ASIL-D systems, Shadow Mode cannot be run safely. And without Shadow Mode or its equivalent, the AIDV vision of continuously adaptive, self-improving vehicles remains marketing collateral. The principle is straightforward: if you can run workloads as isolated containers or virtual machines on shared, hypervisor-partitioned compute, shadow validation becomes just another orchestration policy. If you cannot, and today’s monolithic ECU architectures cannot, then every new model deployment requires a full recertification cycle, turning continuous improvement into a multi-month waterfall.
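To illustrate what "just another orchestration policy" might mean in practice, here is a hypothetical promotion rule evaluated over fleet-aggregated shadow results. The report fields and the thresholds (`min_km`, `margin`) are assumptions chosen for illustration. A real release process would still attach safety-case evidence and regulatory gates on top of a rule like this. The sketch only shows that the decision can be expressed as data plus a policy instead of a bespoke project.

```python
from dataclasses import dataclass


@dataclass
class ShadowReport:
    # Fleet-aggregated results for one candidate model running in shadow mode.
    model_version: str
    shadow_km: float
    candidate_disagreement: float   # candidate vs. human baseline, fraction of steps
    production_disagreement: float  # current production model on the same data


def promotion_decision(r: ShadowReport, min_km: float = 100_000, margin: float = 0.0) -> str:
    """Hypothetical policy: gather enough shadow evidence, then promote only a
    candidate that matches the human baseline at least as well as production."""
    if r.shadow_km < min_km:
        return "keep_shadowing"
    if r.candidate_disagreement + margin <= r.production_disagreement:
        return "promote"
    return "reject"


for report in (
    ShadowReport("v2", 50_000, 0.01, 0.02),
    ShadowReport("v2", 250_000, 0.01, 0.02),
    ShadowReport("v2", 250_000, 0.03, 0.02),
):
    print(report.shadow_km, promotion_decision(report))
```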

The China Factor and the Framing Risk

The OEMs pushing hardest on AI-as-foundation are Chinese. Li Auto, Xpeng, Geely, Zeekr, NIO, Chery, and Leapmotor have all selected Qualcomm’s Snapdragon Digital Chassis. Leapmotor’s D19 will be the first mass-production vehicle on a dual Snapdragon Elite architecture. These companies are not debating whether the vehicle should be AI-defined. They are shipping it. Meanwhile, several Western OEMs have restructured or scaled back their dedicated software organizations after years of underdelivery.

This creates a dangerous framing risk. If Western OEMs adopt AIDV as the new aspirational target before fully executing their SDV strategies, they risk jumping to the next buzzword cycle without resolving the architectural debt from the current one. You cannot leapfrog to AI-defined if your vehicle still runs 120 monolithic ECUs on legacy buses. The sequence matters: hyperconverge first, containerize second, then layer AI.

The Practitioner’s Verdict

AIDV is not a new architecture. It is the intelligence layer that a properly executed SDV architecture enables. The distinction matters because it determines where you invest. Treat AIDV as a separate paradigm and you risk bifurcating your platform—maintaining one stack for “SDV features” and bolting on another for “AI features.” Treat it as the natural maturation of SDV and you invest in making the platform AI-native from the ground up: compute headroom for inference, mixed-criticality orchestration, containerized workloads, knowledge graphs for context and intent, shadow-mode validation, and safety frameworks for probabilistic behavior.

My assessment: the transition is real, but the discontinuity is manufactured. The engineering work is in the execution—hyperconverged architectures, containerized microservices, context-aware knowledge graphs, shadow-mode validation—not in the declaration of a new era. And the only post-sale revenue proof point in the industry—Tesla FSD at $1.3 billion annually—was built on exactly this foundation: a vehicle that learns from its own use, deployed on a platform that can safely run experimental workloads alongside production systems. The companies that will lead are those that stopped relabeling and started shipping. Right now, that means looking East.