AgiBot's Real Breakthrough Isn't the Robot

How UWB became the safety and memory layer the humanoid stack was quietly missing.

AgiBot just declared 2026 "Deployment Year One" at its Shanghai partner conference, where it rolled out five new robot platforms and eight foundation models and marked the 10,000th unit off the line. The YouTube transcript of the announcement now doing the rounds is breathless and mostly right on the facts. But it misses where the real signal lives. The news isn't that A3 looks elegant or that Omnihand 3 has twenty-two degrees of freedom. The news is that AgiBot has publicly committed to the shape of the humanoid stack, and that shape is converging, fast, with what DeepMind, NVIDIA, and Physical Intelligence have all been quietly building.

That shape has four layers that are finally snapping into place: a disaggregated brain, a deployment-state data flywheel, cross-embodiment transfer, and — the one almost nobody is talking about — an external, metric, persistent memory substrate. The last one is being done in wireless. Specifically, in ultra-wideband. That is the punchline of this piece.

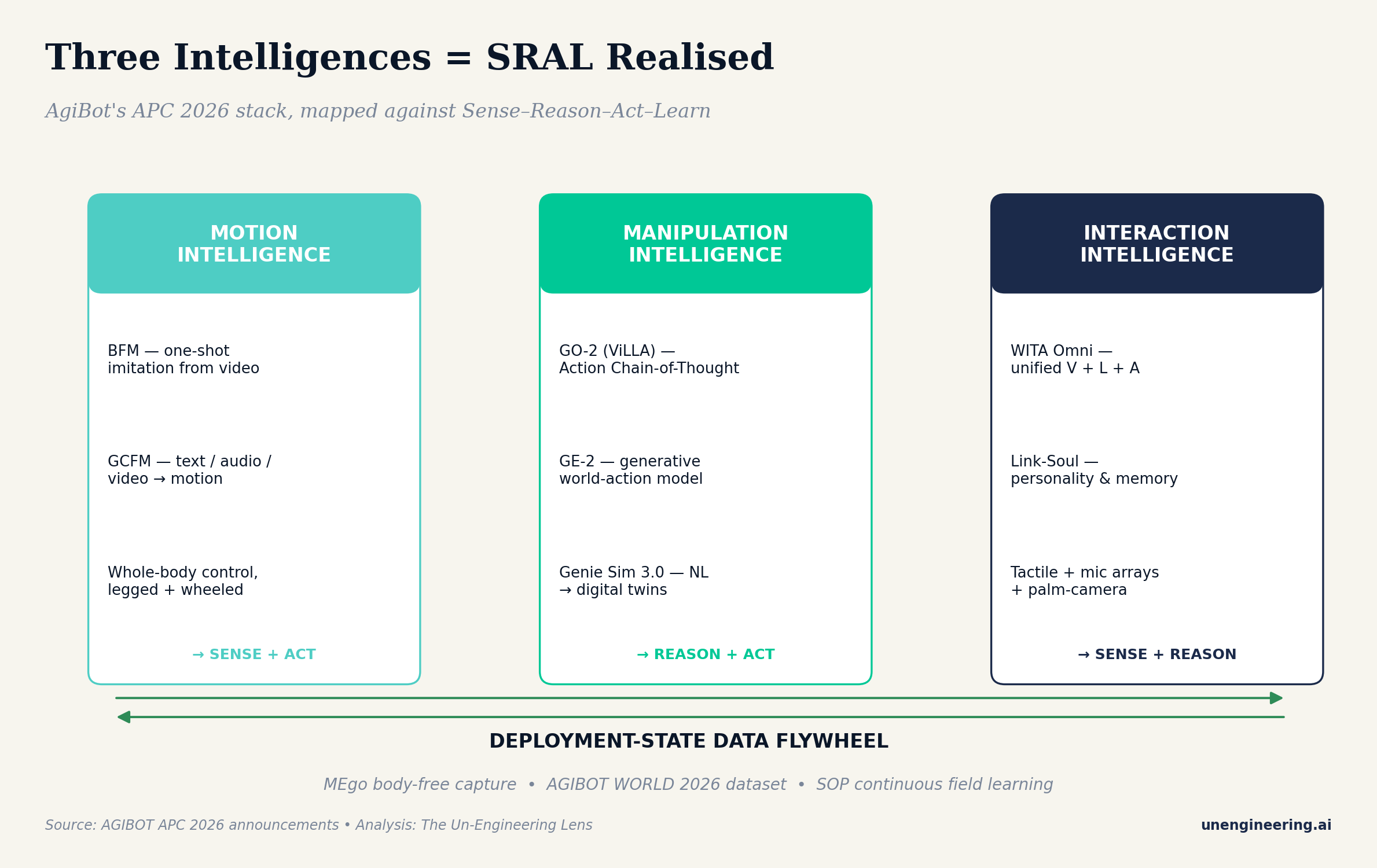

One Body, Three Intelligences — or SRAL by another name

AgiBot's framing — "one robotic body, three intelligences" covering motion, manipulation, and interaction — is the cleanest public articulation of embodied AI disaggregation any vendor has shipped. It is functionally identical to Sense–Reason–Act–Learn, the frame I have been using for four years on these pages. Motion intelligence is Sense + Act (BFM imitating from a single video, GCFM turning speech into gait). Manipulation intelligence is Reason + Act (GO-2 using action chain-of-thought, GE-2 as a generative world model, Genie Sim 3.0 turning natural language into digital twins). Interaction intelligence is Sense + Reason (WITA Omni unifying vision, language, and audio; Link-Soul providing personality and persistent on-device memory).

The interesting part isn't that AgiBot drew the picture. It is that DeepMind's Gemini Robotics-ER 1.6 (released 14 April), NVIDIA's GR00T N1.7 (Cosmos-Reason2 backbone, also this month), and Physical Intelligence's π0.7 (released days before APC) all sit in the same three boxes. A high-level embodied reasoner feeds a vision-language-action model, which is trained against an increasingly generative world simulator, with memory held outside the pure policy. The vendors use different names. The architecture is identical.

What AgiBot adds that the Western labs do not yet talk about openly is MEgo: body-free multimodal data capture. Humans wear the gripper; humans wear the camera; humans walk the factory floor. The robot is not in the loop for data collection. That is the correct move and it is the one most seasoned CTOs will steal immediately, because it breaks the most pernicious coupling in robotics: that you can only generate training data as fast as you can build robots.

The layer everyone skimmed past

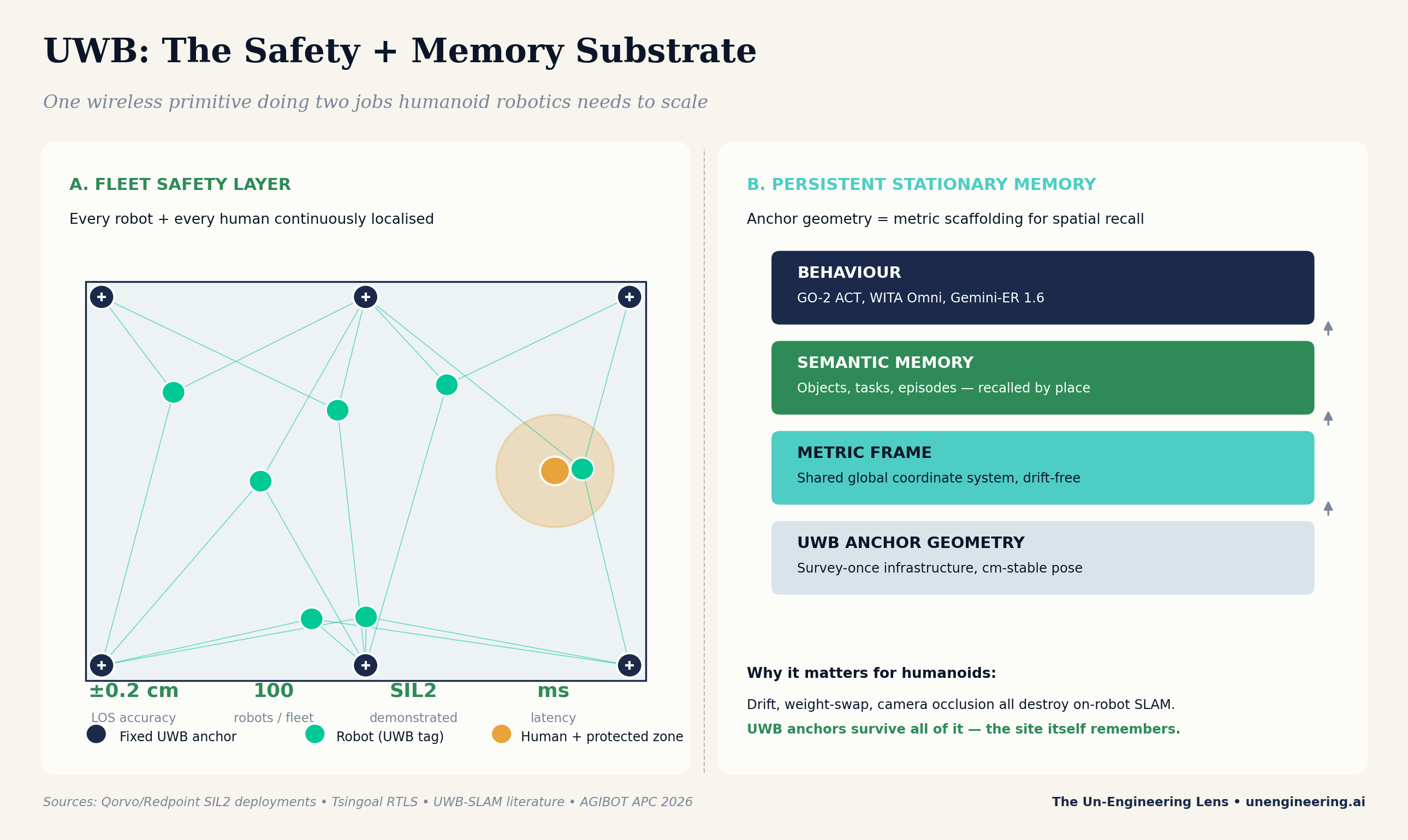

Midway through the A3 spec sheet is a sentence the transcript's author recited without commentary: A3 uses ultra-wideband positioning to synchronise up to one hundred robots at centimetre-level accuracy. That is not a footnote. That is the most important engineering decision AgiBot announced.

UWB is one wireless primitive doing two jobs humanoid robotics desperately needs done: functional safety and persistent spatial memory.

Here is what makes it unusual. Ultra-wideband radios emit sub-nanosecond pulses across a very wide slice of spectrum (at least 500 MHz of bandwidth, drawn from the 7.5 GHz-wide band between 3.1 and 10.6 GHz that the FCC opened for it). Time-of-flight between a moving tag and a fixed anchor is measurable to roughly 0.2 centimetres in line-of-sight conditions, and realistic industrial deployments land at ten to thirty centimetres through occlusion and multipath. They do so consistently, at millisecond latency, without the drift that plagues IMU dead-reckoning and without the light-sensitivity that breaks visual SLAM.
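The time-of-flight arithmetic is worth seeing once. What follows is a minimal sketch, assuming single-sided two-way ranging (the tag sends a poll, the anchor answers after a known reply delay); the function names and the numbers are mine for illustration and are not taken from A3 or from any particular UWB chipset.

```python
# Illustrative sketch only: single-sided two-way ranging (SS-TWR) arithmetic.
# Function names and example values are hypothetical, not a vendor API.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def twr_distance_m(round_trip_s: float, reply_delay_s: float) -> float:
    """Distance from the round-trip time seen by the tag and the anchor's known reply delay."""
    time_of_flight_s = (round_trip_s - reply_delay_s) / 2.0
    return SPEED_OF_LIGHT_M_PER_S * time_of_flight_s


def range_error_m(timing_error_s: float) -> float:
    """Range error produced by a given error in the one-way time-of-flight estimate."""
    return SPEED_OF_LIGHT_M_PER_S * timing_error_s


if __name__ == "__main__":
    # A tag 10 m from an anchor that replies after a (hypothetical) 200 microseconds.
    one_way_s = 10.0 / SPEED_OF_LIGHT_M_PER_S
    print(twr_distance_m(2 * one_way_s + 200e-6, 200e-6))
    # How far off the range is if time of flight is wrong by one nanosecond.
    print(range_error_m(1e-9))
```

One nanosecond of timing error is about thirty centimetres of range, which is why sub-nanosecond pulses and careful timestamping matter. By the same arithmetic, a 0.2-centimetre range figure corresponds to resolving time of flight to a few picoseconds.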

Job one — a wireless functional safety harness

Industrial AGV fleets already use this. Qorvo's UWB silicon, paired with Redpoint Positioning's real-time location system, runs SIL2-certified dynamic safety zones in high-volume warehouses today. Every robot wears a tag; every human wears a tag; when a human steps into a protected volume around a forklift or a picking arm, only the machines within that volume stop or slow down — not the entire fleet. In China, Tsingoal has rolled the same architecture into mining, factory automation, and the first humanoid demonstrations.
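The zone logic itself is simple enough to sketch. This is a toy version under my own assumptions: positions are already resolved in a shared 2D site frame, the stop and slow radii are placeholder values, and none of it reflects Qorvo's, Redpoint's, or Tsingoal's actual implementation, let alone a certified safety function.

```python
# Illustrative sketch only: per-robot dynamic safety zones driven by tag positions.
# Radii, class names, and function names are hypothetical placeholders.
import math
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class Tag:
    tag_id: str
    x_m: float
    y_m: float


def zone_action(robot: Tag, humans: Iterable[Tag],
                stop_radius_m: float = 1.5, slow_radius_m: float = 4.0) -> str:
    """Return 'stop', 'slow', or 'run' based on the nearest human tag."""
    nearest_m = min(
        (math.hypot(robot.x_m - h.x_m, robot.y_m - h.y_m) for h in humans),
        default=math.inf,  # no humans on the floor: nothing to react to
    )
    if nearest_m <= stop_radius_m:
        return "stop"
    if nearest_m <= slow_radius_m:
        return "slow"
    return "run"


def fleet_actions(robots: Iterable[Tag], humans: Iterable[Tag]) -> Dict[str, str]:
    """Evaluate every robot independently so that only nearby machines react."""
    humans = list(humans)
    return {r.tag_id: zone_action(r, humans) for r in robots}
```

The point of the per-robot evaluation is the one made above: a human walking past one picking arm changes the state of that arm, not of the fleet.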

Porting this to humanoids is a categorical upgrade, not an incremental one. Today's humanoid safety story is a camera-based collision estimator, an emergency-stop button, and a lot of hope. ISO 25785-1 is the emerging Type C standard for mobile manipulators with actively controlled stability, an effort that Boston Dynamics and Agility Robotics are co-chairing. It is being written precisely because camera-only safety does not meet the bar for humans sharing a shop floor with a 55-kilogram biped moving at 1.5 metres per second.

UWB fills the gap. It runs as an independent redundancy channel that survives the failure modes of the primary perception stack — darkness, fog, glare, lens contamination, moving shadow. In the Four-Layer Redundancy Model I use for autonomous stacks, UWB sits in Layer 3 (independent localisation of ego, co-workers, and co-robots) with a credible Layer 4 story (enforce a hard geofence even if higher layers are lying). That is where SIL2 lives.
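A rough sketch of what "independent channel plus hard geofence" can mean in code follows. The assumptions are mine: the arbiter takes a speed limit from the primary perception stack and one from the UWB channel, treats a missing or stale UWB reading as a reason to stop, and never lets either layer override the geofence. The names and the 100-millisecond staleness threshold are hypothetical.

```python
# Illustrative sketch only: a conservative arbiter between two independent channels.
# Parameter names and the staleness threshold are hypothetical assumptions.
from typing import Optional


def allowed_speed_m_per_s(
    primary_limit: float,
    uwb_limit: Optional[float],
    uwb_age_s: float,
    inside_geofence: bool,
    max_uwb_age_s: float = 0.1,
) -> float:
    """Return the speed the robot may use, taking the most conservative view."""
    if not inside_geofence:
        return 0.0  # hard geofence wins regardless of what other layers report
    if uwb_limit is None or uwb_age_s > max_uwb_age_s:
        return 0.0  # independent channel lost or stale: fall back to a safe stop
    return max(0.0, min(primary_limit, uwb_limit))
```

Taking the minimum means a camera stack that wrongly reports a clear path cannot raise the limit the UWB channel has set, and the reverse holds too.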

Job two — persistent stationary memory, for free

The second job is the one I find more intellectually exciting. A UWB anchor network, once installed and surveyed, provides a drift-free metric coordinate frame that is literally bolted to the building. Every robot that enters the space inherits a shared global frame without any SLAM bootstrapping, without relocalisation jitter, without the long tail of failure cases that eat production uptime when lighting changes.
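To make "inherits a shared global frame" concrete, here is a minimal 2D trilateration sketch. It assumes three surveyed anchors and noise-free ranges, and it solves the linear system obtained by subtracting the first range circle from the other two. A real deployment would use more anchors, work in 3D, and run a least-squares fit or a filter; the function name is hypothetical.

```python
# Illustrative sketch only: closed-form 2D position from three anchor ranges.
# Assumes noise-free ranges and surveyed anchor coordinates in the site frame.
from typing import Sequence, Tuple

Point = Tuple[float, float]


def trilaterate_2d(anchors: Sequence[Point], ranges_m: Sequence[float]) -> Point:
    """Solve for (x, y) given three anchor positions and the range to each."""
    (x1, y1), (x2, y2), (x3, y3) = anchors
    r1, r2, r3 = ranges_m

    # Subtracting circle 1 from circles 2 and 3 cancels the quadratic terms.
    a11, a12 = 2 * (x2 - x1), 2 * (y2 - y1)
    a21, a22 = 2 * (x3 - x1), 2 * (y3 - y1)
    b1 = r1**2 - r2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = r1**2 - r3**2 + x3**2 - x1**2 + y3**2 - y1**2

    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-9:
        raise ValueError("anchors are collinear; position is not uniquely determined")

    # Cramer's rule for the 2x2 system.
    x = (b1 * a22 - a12 * b2) / det
    y = (a11 * b2 - a21 * b1) / det
    return x, y
```

Because the anchor coordinates are fixed to the building, every robot that runs this calculation gets an answer in the same frame, with no map to bootstrap.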

That matters because the generalisation frontier in robotics foundation models has now reached memory. Physical Intelligence named its newest capability MEM (Multi-Scale Embodied Memory) and positions it as the substrate that lets a policy run tasks longer than ten minutes. NVIDIA's GR00T-Perception workflow bakes in retrieval-augmented memory for "long-term memory of events, spaces, personalised settings, and context-aware responses". Gemini Robotics-ER 1.6 reports state-of-the-art results on spatial reasoning benchmarks because it is being fine-tuned on spatially anchored queries: the "where did I last see the pliers" class of problem.

All three of those memory systems need a metric frame that survives across sessions and across robots. You can get that from heroic visual SLAM, which breaks in the real world. You can get it from dense lidar and HD maps, which cost a fortune to keep current. Or you can get it for roughly the price of a dozen battery-powered anchors screwed to a wall. The site becomes the memory. The robot becomes a tenant.
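"The site becomes the memory" can be sketched as a store keyed to the building's coordinate frame rather than to any one robot's map. This is a toy illustration under my own assumptions: the class and field names are hypothetical, and nothing here describes how MEM, GR00T, or Gemini Robotics-ER actually hold memory.

```python
# Illustrative sketch only: a shared memory of sightings in the site frame.
# Class and field names are hypothetical; this is not any vendor's memory system.
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Sighting:
    label: str        # what was seen, e.g. "pliers"
    x_m: float        # position in the anchor-defined site frame
    y_m: float
    seen_at_s: float  # timestamp of the observation
    robot_id: str     # which robot reported it


class SiteMemory:
    """Keeps the most recent sighting of each object, whichever robot saw it."""

    def __init__(self) -> None:
        self._latest: Dict[str, Sighting] = {}

    def record(self, sighting: Sighting) -> None:
        current = self._latest.get(sighting.label)
        if current is None or sighting.seen_at_s >= current.seen_at_s:
            self._latest[sighting.label] = sighting

    def last_seen(self, label: str) -> Optional[Sighting]:
        return self._latest.get(label)
```

Because both robots report in the same frame, a sighting from one is directly usable by the other on the next shift, with no map alignment between them.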

What the four-way convergence means

Step back from the announcements and the pattern is unmistakable. Four independent leaders — AgiBot, Google DeepMind, NVIDIA, Physical Intelligence — have in the past six weeks converged on the same four-layer architecture. A high-level reasoner separated from a vision-language-action policy. A generative world-simulator feeding synthetic data into the flywheel. An external persistent memory. And, underneath everything, a deployment-state learning loop that treats the field as the training environment.

The competitive edge now lives in the infrastructure decisions each vendor has not yet made public. Where does the metric frame come from? Who owns the data flywheel once the robot is deployed in a customer's plant? How do you meet the safety bar the insurers are about to demand? AgiBot has answered the first question loudly by building UWB coordination into A3's base SKU. My read is that the Western labs will follow within two quarters, because there is no cheaper way to hit SIL2 and there is no simpler way to ground a cross-session memory.

If your autonomy stack works in Bengaluru, it works anywhere. And if your autonomy stack has no external metric memory in 2026, it doesn't work at scale anywhere.

The three things to do on Monday morning

For any enterprise thinking about physical AI procurement in the next eighteen months, three practical implications fall out of this analysis.

First, insist that vendors expose their disaggregation. If a vendor is selling you a monolithic policy, they are shipping the 2024 architecture and will be re-platformed by 2027. Ask where reasoning, action, and memory live separately.

Second, treat the site infrastructure as part of the robot. Budget for UWB anchor deployment and survey as a line item in any humanoid pilot; it is cheaper than the alternative and it will outlive the first two generations of robot hardware. Your industrial engineers have done this before for asset tracking — it is the same bill of materials.

Third, ask for the data flywheel. A vendor that cannot tell you how deployment data flows back into model improvement is selling you a snapshot of a model, not a product. AgiBot's MEgo, NVIDIA's Cosmos, and Physical Intelligence's co-training recipes all assume this loop is closed. If your vendor's loop is open, you are funding someone else's training set without the benefit.

The humanoid bubble discourse is the wrong debate. The question is not whether 2026 robots can dance. They can. The question is whether your operations team can run a hundred of them through a night shift without stopping the line every time a human walks past, and whether those hundred robots remember today what they learned yesterday. The answer to both, increasingly, is wireless.

The Un-Engineering Lens is a weekly column by Thomas Mueller on the architectures that make Physical AI deployable at scale. Views are the author's own.